I've been learning a whole lot about probabilistic graphical models (PGMs) and machine learning lately. I don't consider it straying too far from my physics roots, given that many juicy bits of contemporary AI, such as Markov random fields or Metropolis-Hastings sampling, originated in physics. This connection notwithstanding, my background doesn't give me much of an advantage -- most of the time. A few days ago, however, I was able to apply one delicious trick I knew to work out the integral of a Dirichlet distribution, and I can't help sharing it here. This story has it all -- Fourier representation of the Dirac delta, Gamma functions, Laplace transforms, sandwiches, Bromwiches -- and yet it all fits into a pretty simple narrative.

Our story starts on a stormy summer night, with our protagonist grappling with the following question: how on Earth do you take this integral?

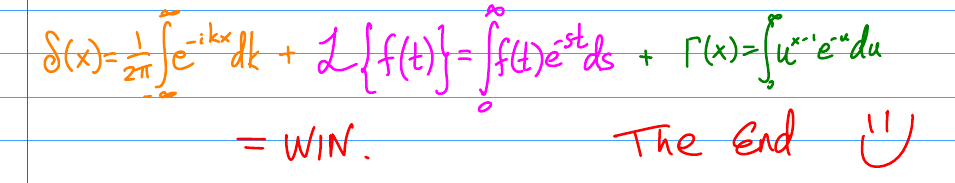

The variables here are multinomial parameters, and thus must be non-negative and sum to 1. In a valiant (but ultimately futile) effort to avoid doing this integral myself, I found this blog post -- which was a good start, and would allow me to casually drop terms like "integration over a simplex" or "k-fold Laplace convolution". But it turns out that we can get away with something much simpler than this simplex business. When faced with a constraint in an integrand, a physicist's instinct is to express it as a Dirac delta and integrate right over it. For the problem above, this approach works like magic! Without giving too much away, here's the punchline:
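
For readers who prefer it typeset, here is a compressed sketch of how the delta-function route can go. The notation is an assumption on my part and may not match the slides: $\theta_i$ for the multinomial parameters, $\alpha_i > 0$ for the exponents, $A = \sum_i \alpha_i$, and $c > 0$ for the offset of the Bromwich contour.

```latex
% Assumed notation (not taken from the slides): \theta_i are the multinomial
% parameters, \alpha_i > 0 the exponents, A = \sum_i \alpha_i, and c > 0 the
% real offset of the Bromwich contour.
\begin{align*}
I &= \int_0^\infty \prod_{i=1}^{K} d\theta_i \, \theta_i^{\alpha_i - 1} \,
     \delta\Big(1 - \sum_{i=1}^{K} \theta_i\Big) \\
  &= \frac{1}{2\pi i} \int_{c - i\infty}^{c + i\infty} ds \, e^{s}
     \prod_{i=1}^{K} \int_0^\infty d\theta_i \, \theta_i^{\alpha_i - 1} e^{-s\theta_i}
     && \text{(delta written as a Bromwich integral)} \\
  &= \prod_{i=1}^{K} \Gamma(\alpha_i) \cdot
     \frac{1}{2\pi i} \int_{c - i\infty}^{c + i\infty} ds \, \frac{e^{s}}{s^{A}}
     && \text{(each $\theta_i$ integral gives $\Gamma(\alpha_i) / s^{\alpha_i}$)} \\
  &= \frac{\prod_{i=1}^{K} \Gamma(\alpha_i)}{\Gamma(A)}
     && \text{(inverse Laplace transform of $s^{-A}$ at $t = 1$)}
\end{align*}
```

The constraint never has to be handled geometrically: once the delta is exponentiated, the $K$ integrals decouple, and the only work left is one standard inverse Laplace transform.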

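For the skeptics, the standard closed form for this kind of integral, the product of Gamma(alpha_i) divided by Gamma of the sum of the alpha_i, is easy to sanity-check numerically. Below is a minimal sketch for three parameters, assuming the integrand is the product of theta_i^(alpha_i - 1); the function names are made up for illustration, and the brute-force part is a plain midpoint rule, not anything clever.

```python
import math


def dirichlet_norm_closed_form(alphas):
    """Closed form: prod_i Gamma(alpha_i) / Gamma(sum_i alpha_i)."""
    # Work in log space so large alphas do not overflow math.gamma.
    log_value = sum(math.lgamma(a) for a in alphas) - math.lgamma(sum(alphas))
    return math.exp(log_value)


def dirichlet_norm_midpoint(a1, a2, a3, n=200):
    """Brute-force midpoint-rule integral over the 2-simplex (K = 3 only).

    Substituting theta2 = (1 - theta1) * u maps the triangle onto the unit
    square, at the cost of a Jacobian factor (1 - theta1).
    """
    h = 1.0 / n
    total = 0.0
    for i in range(n):
        t1 = (i + 0.5) * h
        for j in range(n):
            u = (j + 0.5) * h
            t2 = (1.0 - t1) * u
            t3 = 1.0 - t1 - t2  # the sum-to-one constraint fixes theta3
            total += (
                t1 ** (a1 - 1) * t2 ** (a2 - 1) * t3 ** (a3 - 1) * (1.0 - t1)
            )
    return total * h * h


if __name__ == "__main__":
    alphas = (2.0, 3.0, 4.0)
    print("closed form:", dirichlet_norm_closed_form(alphas))
    print("midpoint   :", dirichlet_norm_midpoint(*alphas))
```

For alphas = (2, 3, 4) the closed form is Gamma(2) Gamma(3) Gamma(4) / Gamma(9) = 12/40320 = 1/3360, and the brute-force number should land close to it.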

Want to know more? Because I've been on a Xournal/YouTube binge lately, I narrated this derivation and put it up for the world to see. Here are the "slides" and below is the actual video. Enjoy!

One Response


nice job!! The video is entertaining as well. :)