Tweak of the day:

Problem: Cygwin doesn't support UTF-8, which causes trouble with Unicode filenames (e.g. when running backups).

Solution: for Cygwin, download the patch from http://www.okisoft.co.jp/esc/utf8-cygwin/

Then, in .bashrc:

export LC_CTYPE=en_US.UTF-8

export LANG=en_US.UTF-8

alias ls='ls --show-control-chars'
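A quick way to confirm that the exports above took effect in a new shell is to look at the locale variables. The sketch below is only an illustration: the helper name is_utf8_locale is made up for this example, and it only checks what the variables request, not whether Cygwin actually honors it.

```shell
#!/usr/bin/env bash
# Hypothetical helper (not part of Cygwin or the patch): succeeds if the
# effective character-type locale names a UTF-8 encoding.
# Precedence follows POSIX: LC_ALL, then LC_CTYPE, then LANG.
is_utf8_locale() {
  effective=${LC_ALL:-${LC_CTYPE:-${LANG:-}}}
  case $effective in
    *.UTF-8*|*.utf-8*|*.UTF8*|*.utf8*) return 0 ;;
    *) return 1 ;;
  esac
}

if is_utf8_locale; then
  echo "UTF-8 locale requested: ${LC_ALL:-${LC_CTYPE:-$LANG}}"
else
  echo "No UTF-8 locale set; check the exports in .bashrc" >&2
fi
```

Running locale charmap afterwards shows which encoding the C library actually picked up, which is the more telling check.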

Terminal: mrxvt doesn't support Unicode, but urxvt does. If urxvt is not using a Unicode font by default (or the font is ugly), list the available Unicode fonts with xlsfonts | grep iso10646 and put one of them into .Xdefaults in the form:

URxvt*font: -misc-dejavu sans mono-medium-r-normal--*-120-*-*-m-0-iso10646-1

Don't forget to re-read .Xdefaults using xrdb < ~/.Xdefaults.

Bug of the day: sometimes my Emacs responds to C-k by performing winner-undo. Version 22.3 did that sometimes, 23 does that more often. Bizarre.

Annoyance/tweak #2 of the day: in Emacs, page down followed by page up does not return the cursor to the same location. Annoying if the page-downs are accidental. (Turns out that there's a simple fix: (setq scroll-preserve-screen-position t). Incidentally, in the Usenet thread discussing this, another useful trick came up: C-u C-SPC pops the mark. So, for a quick-and-dirty bookmark, one could use C-SPC C-SPC or C-SPC C-g to set it and C-u C-SPC to jump back!)

"Well, this wasted my time" of the day: I aliased grep to produce a more colorful and informative output. Some time later my ssh agent broke -- it kept asking for my password. Took me some time to connect the two, but what happened was: the ssh agent script is invoked via source, meaning aliased commands are used -- meaning it was getting unexpected output from my grep. Clever fix: replace grep with `which grep` -- this effectively bypasses bash aliasing. Cleverer fix: don't alias things that are important and are likely to be found in scripts.
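Here is a small sketch of the effect, for the record. The alias is a stand-in (grep -n rather than my actual colorful one, so the difference shows up as a line-number prefix), and the sample string is made up. Besides `which grep`, the shell offers two built-in ways to sidestep an alias: the command builtin and a leading backslash.

```shell
#!/usr/bin/env bash
# bash expands aliases only in interactive shells unless this option is set;
# shells without shopt simply skip this line.
shopt -s expand_aliases 2>/dev/null || true

# Stand-in for an "informative" alias; a color alias would add escape codes
# to the output in the same way this one adds a line number.
alias grep='grep -n'

sample='agent pid 1234'

# Goes through the alias, so the output gains a "1:" prefix.
aliased=$(printf '%s\n' "$sample" | grep 'agent')

# grep is no longer the first word, so no alias expansion happens;
# command also skips shell functions named grep.
plain=$(printf '%s\n' "$sample" | command grep 'agent')

# Quoting any part of the word (here with a backslash) suppresses the alias.
escaped=$(printf '%s\n' "$sample" | \grep 'agent')

printf 'aliased: %s\n' "$aliased"
printf 'plain:   %s\n' "$plain"
printf 'escaped: %s\n' "$escaped"
```

A script that parses the output of grep sees the first form when it is sourced into a shell with such an alias, which is what tripped up the agent script.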

"Makes my life easier" of the day: with Firefox 3.5 you can set Gmail to handle mailto: links. Very handy -- no more stupid Outlook popups. Details at http://googlesystem.blogspot.com/2009/06/set-gmail-as-default-email-client-in.html

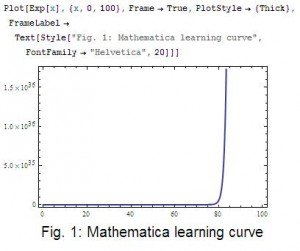

I think the main problem with Mathematica is very simple: the Notebook interface tries to be user-friendly and to enable literate programming, and it makes straightforward calculations very easy. However, this belies the fact that Mathematica is an extremely complex system, and it takes a great deal of mathematical sophistication to understand how it works. Anyone who is not a mathematician or a computer scientist by trade (or by calling) runs head first into the exponential learning curve (Fig. 1). I don't know anybody in my scientific community (Applied Physics) who is proficient with Mathematica beyond the basics they need for quick plots and integrals.

![\int | F^{-1}\{T(k)\} | /\mathrm{max}[|F^{-1}\{T(k)\}|]dx](http://dnquark.com/blog/wp-content/plugins/latex/cache/tex_955b2241af3f8216fb1867238479a617.gif)